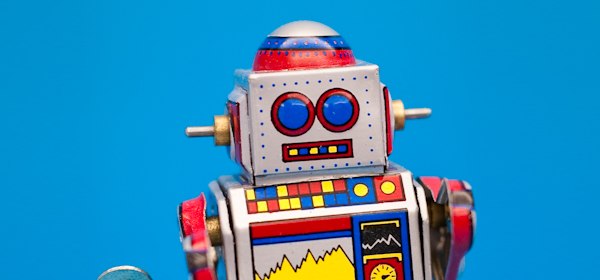

Let’s get one thing clear. Robots.txt isn’t just a fancy file for webmaster-purists and professional SEOs. In fact, every WordPress developer should know a thing or two about the file and why it’s so important for every blog’s SEO.

So first, here’s the big question:

What is robots.txt and why is it important?

To state the obvious: it’s simply a text file. But there’s one interesting thing about it: it isn’t displayed to visitors anywhere on the blog itself.

Instead, it sits in the root directory of the site and serves only one purpose: it’s the file search engines check before they start crawling a blog, to find out what they should and shouldn’t crawl.

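So in essence, this file lets you tell search engines which parts of your blog you want them to crawl (and therefore index and rank), and which parts you DON’T. Strictly speaking, robots.txt controls crawling rather than indexing: a blocked URL can still appear in search results if other pages link to it, but search engines won’t read its content.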

The truth is that not every page (or area) of a blog is worth ranking. As a webmaster or WordPress developer, you have to be able to identify those areas and use robots.txt to speak to search engines directly and let them know what’s going on.

Creating robots.txt for WordPress

First of all, let me address the official guidelines at codex.wordpress.org, in particular the Robots.txt Optimization page. It includes an example file. Here’s the thing: don’t use it as a template!

I’m not saying it’s completely bad, but it can create a lot of problems for some WP blogs. It all depends on your settings: things like permalinks and the category and tag bases. That’s why you need to create robots.txt for each blog individually and be careful whenever you’re dealing with a template of any kind.

Things you should always block

There are some parts of every WP blog that should always be blocked: the “cgi-bin” directory and the standard WP directories.

The “cgi-bin” directory is found on many web servers, and it’s where CGI scripts can be installed and then run. Nowadays, some servers don’t even allow access to this directory, but it certainly won’t do you any harm to include it in a Disallow directive in your robots.txt file.

There are three standard WP directories (wp-admin, wp-content, wp-includes). You should block them because, essentially, there’s nothing there that search engines would consider interesting.

But there’s one exception. The wp-content directory has a subdirectory called “uploads”. It’s where everything you upload with the WP media uploader ends up. The standard approach here is to leave it unblocked.

Here are the directives to get this done. Rather than blocking wp-content as a whole, they block its plugins, cache, and themes subdirectories and explicitly allow uploads:

Disallow: /cgi-bin/

Disallow: /wp-admin/

Disallow: /wp-includes/

Disallow: /wp-content/plugins/

Disallow: /wp-content/cache/

Disallow: /wp-content/themes/

Allow: /wp-content/uploads/

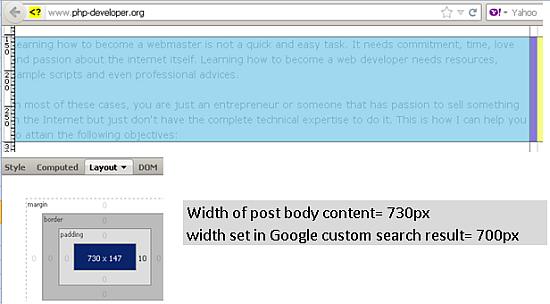

Notice one small difference from the template in the WP Codex: it tells you to block “/wp-admin” (without the trailing “/” character). This can be a problem if your permalinks are set to “/%postname%/” only. In that case every post with a slug beginning with “wp-admin” (say, “wp-admin-tips”) will be blocked from crawling, because a Disallow rule matches every URL that starts with the given path.

I know that only a small group of bloggers is likely to publish such posts (the “blogging about WordPress” group), but as a WP developer you can’t make assumptions about what’s going to happen on the blog you’re working on once it takes off. That’s why it’s better to remember the trailing “/” character here.
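You can see the effect of that trailing slash with Python’s standard `urllib.robotparser` module, which applies Disallow paths as plain prefixes. This is only a sketch: the helper name and the post slug are made up, and individual crawlers may differ in the details.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt: str, path: str, agent: str = "*") -> bool:
    """Return True if `path` is disallowed for `agent` by the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(agent, path)


codex_rule = "User-agent: *\nDisallow: /wp-admin"   # no trailing slash
safer_rule = "User-agent: *\nDisallow: /wp-admin/"  # with trailing slash

post = "/wp-admin-tips-for-beginners/"  # hypothetical post slug

print(is_blocked(codex_rule, post))                     # True: the post is caught too
print(is_blocked(safer_rule, post))                     # False: only the directory is blocked
print(is_blocked(safer_rule, "/wp-admin/options.php"))  # True
```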

Things to block depending on your WP configuration

Every blog has a set of settings that are unique and need to be handled individually when creating the robots.txt file.

The first is whether the blog uses categories or tags to structure its content, or both, or neither.

If you’re using categories to structure your blog, make sure that tag archives are blocked from search engines. To do that, first check the “tag base” for tag archives (Admin panel > Settings > Permalinks). If the field is blank, the base is “tag”. Use this base in a Disallow directive:

Disallow: /tag/

If you’re using tags to structure your blog, make sure that category archives are blocked from search engines. Again, check the category base in the same place (a blank field means the base is “category”) and then block it:

Disallow: /category/

If you’re using both categories and tags, you don’t need to do anything here.

If you’re using neither categories nor tags, block both by using their bases:

Disallow: /tag/

Disallow: /category/

Why should you bother? An honest question. The main reason is duplicate content. For example, if you’re not using categories, every post ends up in the default “Uncategorized” category, so that category archive looks exactly like your home page. In other words, there are two pages with exactly the same content but different URLs:

yourdomain.com/

yourdomain.com/category/uncategorized

I’m sure I don’t need to explain why that’s bad. You have to make sure such a situation doesn’t happen.

Next up is the author archive. If you’re dealing with a single-author blog, there’s no point in keeping the author archive available to search engines. It creates the same duplicate content issue as the tag and category archives. You can block the author archive by using:

Disallow: /author/
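The decisions in this section are mechanical enough to write down as a small function. The sketch below is hypothetical (the function and parameter names are made up for illustration) and assumes the default “category” and “tag” bases unless you pass the ones from your Permalinks screen:

```python
def taxonomy_rules(uses_categories: bool, uses_tags: bool, single_author: bool,
                   category_base: str = "category", tag_base: str = "tag") -> list[str]:
    """Return the Disallow lines this section suggests for a given blog setup.

    The default bases are the ones WordPress uses when the fields under
    Settings > Permalinks are left blank.
    """
    rules = []
    if not uses_tags:            # categories only, or neither
        rules.append(f"Disallow: /{tag_base}/")
    if not uses_categories:      # tags only, or neither
        rules.append(f"Disallow: /{category_base}/")
    if single_author:            # author archive duplicates the home page
        rules.append("Disallow: /author/")
    return rules


# A single-author blog structured with categories only:
print("\n".join(taxonomy_rules(uses_categories=True, uses_tags=False, single_author=True)))
```

With both taxonomies in use and several authors, the function returns an empty list, matching the “don’t do anything here” case.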

Files to block separately

WordPress uses a number of different files to display content. Most of these don’t need to be accessible to search engines.

The list most often includes PHP, JS, INC, and CSS files. You can block them by using:

Disallow: /index.php # separate directive for the main script file of WP

Disallow: /*.php$

Disallow: /*.js$

Disallow: /*.inc$

Disallow: /*.css$

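(The “*” character matches any sequence of characters, and a trailing “$” matches the end of a URL. Both are extensions supported by major search engines such as Google and Bing, not part of the original robots.txt standard.)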

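However, be careful with this. It’s not advisable to block any other file types (images, text files, etc.), because even if such a file isn’t in the uploads directory, you probably still want search engines to find it. Also keep in mind that Google renders pages much like a browser does and recommends leaving the CSS and JavaScript files your pages depend on crawlable, so weigh the JS and CSS lines (and the themes and plugins blocks) carefully.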

Note: if you used the “Allow: /wp-content/uploads/” line earlier, then PHP, JS, INC, and CSS files inside the uploads directory will still be visible to Google (and to other crawlers that resolve conflicts the same way), because when an Allow and a Disallow rule both match a URL, Google follows the more specific, longer one.
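Python’s built-in `urllib.robotparser` only does prefix matching, so the sketch below hand-rolls the rules as Google documents them: “*” matches any run of characters, a trailing “$” anchors the pattern to the end of the URL, and when an Allow and a Disallow both match, the longer pattern wins (Allow wins a tie). The helper names and sample paths are hypothetical, and other crawlers may resolve conflicts differently.

```python
import re


def rule_matches(pattern: str, path: str) -> bool:
    """Google-style matching: '*' is a wildcard, a trailing '$' anchors the end."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape everything literally, then let each '*' stand for any run of characters.
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    # Without '$', the pattern only needs to match the start of the path.
    return bool(re.fullmatch(regex, path) if anchored else re.match(regex, path))


def is_allowed(rules: list[tuple[str, str]], path: str) -> bool:
    """Pick the longest matching pattern; on a tie, Allow beats Disallow."""
    best = None  # (pattern length, is_allow) of the strongest match so far
    for directive, pattern in rules:
        # An empty pattern (e.g. "Disallow:") matches nothing.
        if pattern and rule_matches(pattern, path):
            candidate = (len(pattern), directive == "Allow")
            if best is None or candidate > best:
                best = candidate
    return True if best is None else best[1]


rules = [
    ("Disallow", "/*.php$"),
    ("Disallow", "/*.css$"),
    ("Allow", "/wp-content/uploads/"),
]

print(is_allowed(rules, "/wp-login.php"))                         # False
print(is_allowed(rules, "/wp-content/themes/x/style.css"))        # False
print(is_allowed(rules, "/wp-content/themes/x/style.css?ver=1"))  # True: "$" needs .css at the very end
print(is_allowed(rules, "/wp-content/uploads/2012/01/demo.php"))  # True: the longer Allow wins
```

Note the third case: because of the “$” anchor, a stylesheet requested with a query string (such as the ?ver= suffix WordPress often appends) is not matched by /*.css$.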

Things not to block

The final choice is up to you, of course, but I would not block any images from Google Image Search. You can ensure that with a separate record:

User-agent: Googlebot-Image

Disallow:

Allow: / # not a standard use of this directive but Google prefers it this way here

Another robot to handle individually is the Google AdSense robot, but only if you’re part of the AdSense program. In that case you need to make sure it can see all the pages your users can see. The easiest way to do this is with a very similar record:

User-agent: Mediapartners-Google

Disallow:

Allow: /
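Separate records like these work because a crawler follows the record whose User-agent line matches it and ignores the User-agent: * record. Python’s standard `urllib.robotparser` shows the idea; the sketch below uses only the Googlebot-Image record (the Mediapartners-Google one behaves the same way), and the image path is just an example:

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-includes/

User-agent: Googlebot-Image
Disallow:
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

image = "/wp-includes/images/smilies/icon_smile.gif"
print(parser.can_fetch("Googlebot-Image", image))  # True: its own record applies
print(parser.can_fetch("SomeOtherBot", image))     # False: falls back to the * record
```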

Of course, the issue doesn’t end with just these two examples. There are probably many more of them because every blog is different. Feel free to comment and point out some additional areas of a WP blog that shouldn’t be blocked.

How to handle duplicate content

No matter what you do, your blog will always have some duplicate content. That’s just how WP is constructed; you can’t really prevent it. But you can still use robots.txt to keep search engines away from it.

There are a number of duplicate content areas on every blog, for instance:

Search results

This is what a search result page URL usually looks like for a WP blog:

yourdomain.com/?s=phrase

(Sometimes there are additional parameters after the search phrase.)

This is both duplicate content and content generated automatically – something Google really doesn’t like. That’s why it’s good to block this by using:

Disallow: /*?

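Apart from the search results, this directive blocks every URL that includes a question mark. That shouldn’t cause problems on a WordPress blog that uses “pretty” permalinks, but with the default (“Plain”) setting your posts live at URLs like yourdomain.com/?p=123, and this line would block all of them.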
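Here is a sketch of the question-mark rule, using a hand-rolled matcher that follows Google’s documented wildcard rules (the helper name and sample URLs are hypothetical). Under the default “Plain” permalinks a post lives at a URL like /?p=123, which /*? matches just like a search page:

```python
import re


def rule_matches(pattern: str, path: str) -> bool:
    """Google-style matching: '*' is a wildcard, a trailing '$' anchors the end."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return bool(re.fullmatch(regex, path) if anchored else re.match(regex, path))


paths = {
    "/?s=phrase": "search results",
    "/?p=123": "a post under the default Plain permalinks",
    "/some-post/": "the same post under /%postname%/",
}
for path, label in paths.items():
    print(f"{path:<14} blocked={rule_matches('/*?', path)}  ({label})")
```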

Trackback URLs

Some blogs use trackback URLs that are essentially duplicate content of the original post. Here’s an example of a normal post’s URL and its trackback URL:

yourdomain.com/some-post/

yourdomain.com/some-post/trackback/

To prevent search engines from accessing such content you can use:

Disallow: /trackback/

Disallow: */trackback/

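Now why the duplicate statements? The implementation of the Robots Exclusion Standard can vary between robots. The first line is a plain path prefix that every robot understands, but it only matches a trackback URL at the root of the site (yourdomain.com/trackback/). The second uses the “*” wildcard, which major crawlers such as Googlebot and Bingbot support, to match trackback URLs under any post. Together they cover both cases.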
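Here is a sketch of what each trackback line actually matches, using a hand-rolled matcher that follows Google’s documented wildcard rules (the helper name is hypothetical, and robots without wildcard support will not treat the second pattern this way):

```python
import re


def rule_matches(pattern: str, path: str) -> bool:
    """Google-style matching: '*' is a wildcard, a trailing '$' anchors the end."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return bool(re.fullmatch(regex, path) if anchored else re.match(regex, path))


post_trackback = "/some-post/trackback/"

print(rule_matches("/trackback/", post_trackback))   # False: the prefix only covers /trackback/ at the root
print(rule_matches("*/trackback/", post_trackback))  # True: the wildcard reaches nested URLs
print(rule_matches("/trackback/", "/trackback/"))    # True
```

The same reasoning applies to the /feed/ and /print/ pairs.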

RSS feeds

RSS feeds are just another example of purely duplicate content. You can keep them out of search engines by using:

Disallow: /feed/

Disallow: */feed/

Date-based archives

Date-based archives are similar to RSS feeds, only they create a lot more duplicate content. Let me give you an example. Say that today is January 2nd, 2012, and you’ve published a post. Looking only at date-based URLs, this post can be accessed via:

yourdomain.com/2012/

yourdomain.com/2012/01/

yourdomain.com/2012/01/02/

That’s a lot of duplicate content. You can eliminate it by using:

# the year your blog was born

Disallow: /2009/

Disallow: /2010/

Disallow: /2011/

Disallow: /2012/

# and so on

Now, here’s what’s important. Why all the separate directives instead of a single “Disallow: /20*/$”, which would work too? If you used that directive, you’d also block every post whose slug begins with “20”, and such posts are easy to imagine, for example “20-reasons-why-something” or “20-tips-for-someone”.

Using a separate directive for each year of your blog’s existence is the safest way to block only the date-based archives and nothing else. One caveat: this only works if your post permalinks don’t start with the date. With a structure such as “/%year%/%monthnum%/%postname%/”, these lines would block every post as well.
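The trade-off is easy to check with a small wildcard matcher written to Google’s documented rules (“*” matches anything, a trailing “$” anchors the end). The helper names and URLs below are hypothetical; the last case shows why per-year lines are only safe when your post permalinks don’t start with the date.

```python
import re


def rule_matches(pattern: str, path: str) -> bool:
    """Google-style matching: '*' is a wildcard, a trailing '$' anchors the end."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return bool(re.fullmatch(regex, path) if anchored else re.match(regex, path))


def blocked_by_years(path: str, years: range) -> bool:
    """Check a path against one "Disallow: /YYYY/" line per year."""
    return any(rule_matches(f"/{year}/", path) for year in years)


years = range(2009, 2013)                # 2009 through 2012, as in the example
listicle = "/20-reasons-why-something/"  # hypothetical post slug
archive = "/2012/01/02/"
dated_post = "/2012/01/some-post/"       # a post under /%year%/%monthnum%/%postname%/

print(rule_matches("/20*/$", listicle))     # True: the shortcut also blocks the post
print(blocked_by_years(listicle, years))    # False
print(blocked_by_years(archive, years))     # True
print(blocked_by_years(dated_post, years))  # True: date-based permalinks get blocked too
```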

Other possible duplicate content

Remember the three things I talked about earlier: category, tag, and author archives. They’re all duplicate content, so make sure to take care of them.

Additionally, some plugins create even more duplicate content. Whenever you’re installing a plugin make sure to check whether it creates any additional URLs.

I can give you one example: the WP-Print plugin. It’s a very cool plugin and I highly encourage you to use it. It creates printer-friendly versions of your posts and pages. The only downside is the additional URLs. For example, here’s a normal post and its printer-friendly version:

yourdomain.com/some-post/

yourdomain.com/some-post/print/

Classic duplicate content. Make sure to exclude it by using:

Disallow: /print/

Disallow: */print/

How to actually edit the file

There are two main ways of doing this: you can either use a plugin or handle the file manually.

The plugin is called Robots Meta, and it does a lot more than just let you create the robots.txt file. I really advise you to get familiar with it; set up the right way, it can give you a real SEO advantage.

If you want to do it manually, just create a robots.txt file in a plain text editor such as Notepad and upload it via FTP to the root directory of your domain. The file needs to be accessible at this URL:

yourdomain.com/robots.txt
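Crawlers only look for robots.txt at the root of the host, so even if WordPress lives in a subdirectory such as /blog/, the file still has to go in the domain’s root. A tiny sketch using Python’s `urllib.parse.urljoin` (the function name is hypothetical) makes that explicit:

```python
from urllib.parse import urljoin


def robots_url(page_url: str) -> str:
    """Return the robots.txt location crawlers check for this page's host."""
    # An absolute path replaces the page's path but keeps scheme and host.
    return urljoin(page_url, "/robots.txt")


print(robots_url("http://yourdomain.com/2012/01/some-post/"))
# http://yourdomain.com/robots.txt
print(robots_url("http://yourdomain.com/blog/some-post/"))
# http://yourdomain.com/robots.txt  (not /blog/robots.txt)
```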

Once you have it set up, you can test it in Google Webmaster Tools.

The final template you can use

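I know, I told you not to use templates, but we’ve covered many different directives here, so I think it’d be useful to give you a template you can take as a starting point when working on your robots.txt.

Again, it’s probably not a template you can copy onto your blog as-is without any adjustments. When you create the actual file, keep the lines of each record together and put a single blank line between records (before each new User-agent line and before the Sitemap line), since some parsers treat a blank line as the end of a record.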

User-agent: *

Disallow: /cgi-bin/

Disallow: /wp-admin/

Disallow: /wp-includes/

Disallow: /wp-content/plugins/

Disallow: /wp-content/cache/

Disallow: /wp-content/themes/

Allow: /wp-content/uploads/

# Disallow: /tag/ # uncomment if you’re not using tags

# Disallow: /category/ # uncomment if you’re not using categories

# Disallow: /author/ # uncomment for single user blogs

Disallow: /feed/

Disallow: /trackback/

# Disallow: /print/ # wp-print block

Disallow: /2009/ # the year your blog was born

Disallow: /2010/

Disallow: /2011/

Disallow: /2012/ # and so on

Disallow: /index.php # separate directive for the main script file of WP

Disallow: /*? # search results

Disallow: /*.php$

Disallow: /*.js$

Disallow: /*.inc$

Disallow: /*.css$

Disallow: */feed/

Disallow: */trackback/

# Disallow: */print/

User-agent: Googlebot-Image

Disallow:

Allow: /

User-agent: Mediapartners-Google

Disallow:

Allow: /

Sitemap: http://yourdomain.com/sitemap.xml
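Some parsers follow the original robots.txt convention that a blank line ends a record. Python’s `urllib.robotparser` is one of them, so it shows why the lines of each record should sit together in the real file, with blank lines only between records. In this sketch the helper name is hypothetical; search engines such as Google may be more lenient, but a compact file is the safer choice.

```python
from urllib.robotparser import RobotFileParser


def blocked(robots_txt: str, path: str) -> bool:
    """Return True if `path` is disallowed for the generic '*' agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch("*", path)


double_spaced = "User-agent: *\n\nDisallow: /wp-admin/\n"  # blank line inside the record
compact = "User-agent: *\nDisallow: /wp-admin/\n"

print(blocked(double_spaced, "/wp-admin/"))  # False: the blank line ended the record early
print(blocked(compact, "/wp-admin/"))        # True
```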

There you have it: a complete guide to creating a WordPress-friendly robots.txt file. I hope you find it useful. Feel free to comment and tell me what you think, and let me know if I’ve forgotten anything.

And finally, did you spend a significant amount of time when creating the robots.txt file for your blog?